Introduction

In this vignette we demonstrate how to conduct a simulation study with minimal coding. We show how to do a structural evaluation of methods for clustering longitudinal data, for different specifications, and across various (synthetic) data scenarios.

A typical workflow in a simulation study involves:

There are many packages available in R which facilitate

such a workflow. In this vignette, we use the simTool

package.

Due to the relatively large number of models fitted, we will disable all model outputs:

Data generation

Using synthetic data allows us to investigate to performance of the methods under specific conditions of interest (e.g., sensitivity to noise, within-cluster variability, and cluster separation).

For demonstration purposes, we define a trivial dataset comprising two distinct groups with group trajectories represented by a line (i.e., intercept and slope). A trajectory 𝐲i belonging to group k is described by yij=β0k+β1ktj+z0i+eij. Here, β0k and β1k are the intercept and slope for group k, respectively. Furthermore, zi denotes the trajectory-specific random intercept, i.e., its deviation from the group trajectory. Lastly, eij represents independent random noise with eij∼N(0,σ2).

We can generate data according to this model using a utility function

named generateLongData() that is included in the package.

This function generates datasets based on a mixture of linear mixed

models. We create a wrapper around this function in order to adapt the

function to our needs. Most importantly, we code the shape and

coefficients of the group trajectories as fixed, setting

(β0A,β1A)=(0,0)

for group A (40%) and

(β0B,β1B)=(1,−1)

for group B (60%). Other data settings (e.g., the magnitude of

perturbation) are passed via ....

dataGen <- function(numTraj, ..., data.seed) {

latrend::generateLongData(

sizes = c(floor(numTraj * .4), ceiling(numTraj * .6)),

fixed = Y ~ 0,

cluster = ~ 1 + Time,

random = ~ 1,

id = "Traj",

clusterCoefs = cbind(c(0, 0), c(1, -1)),

seed = data.seed,

...

)

}Because the simTool package does not appear to support

overlapping names between data and method functions, we needed to rename

the seed argument of our underlying data generating

function.

Note that the generateLongData is included in the

latrend package primarily for demonstration purposes. For

generating data in a more flexible way, consider using the simstudy

package.

Now that we have defined a data generating function, we set the default trajectory id and time column names, so we do not have to specify this in any future calls.

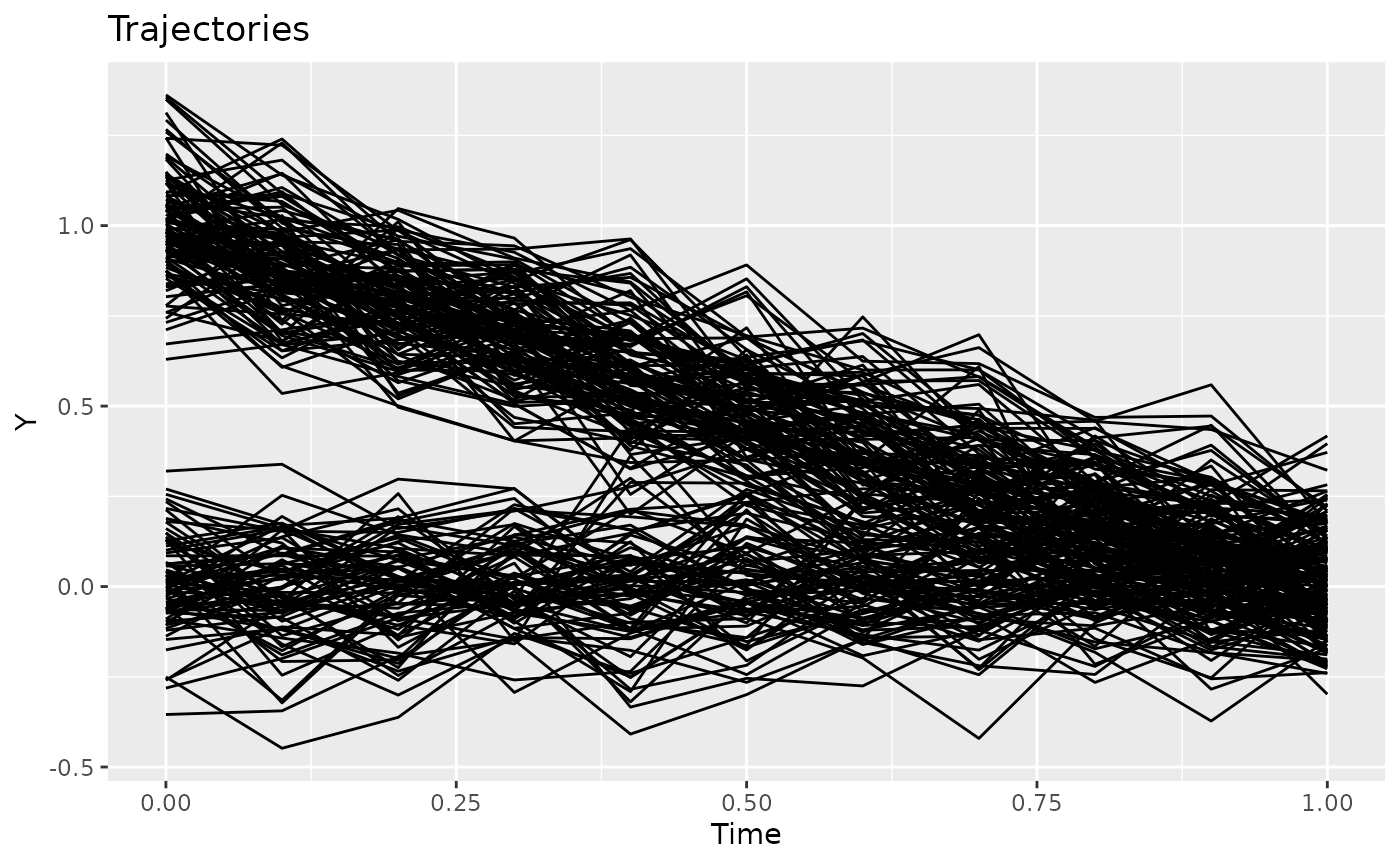

options(latrend.id = "Traj", latrend.time = "Time")It’s a good idea to inspect the data we are generating.

exampleData <- dataGen(numTraj = 200, randomScale = .1, data.seed = 1)

plotTrajectories(exampleData, response = "Y")

Data settings

We now specify the data settings of interest. In this example, we

evaluate the methods on datasets with varying sample size (50 and 250

trajectories) and random intercept scale (small and large random

intercept). Moreover, we evaluate methods repeatedly under these

settings by specifying different values for data.seed,

generating a slightly different dataset for each seed.

As we intend to evaluate the methods on each combination of data

settings, we need to generate a table of all permutations. This can be

done using the expand.grid() function, or using

expand_tibble().

dataGrid <- simTool::expand_tibble(

fun = "dataGen",

numTraj = c(50, 250),

randomScales = c(.1, .5),

data.seed = 1:2

)

head(dataGrid)

#> # A tibble: 6 × 4

#> fun numTraj randomScales data.seed

#> <chr> <dbl> <dbl> <int>

#> 1 dataGen 50 0.1 1

#> 2 dataGen 250 0.1 1

#> 3 dataGen 50 0.5 1

#> 4 dataGen 250 0.5 1

#> 5 dataGen 50 0.1 2

#> 6 dataGen 250 0.1 2Method settings

Similarly to the data settings table, we specify a table of all

permutations for the method settings. Typically this is done separately

for each method, as their settings will usually differ. In this example

we evaluate KmL and GCKM only on differing number of clusters so the

method settings can be jointly generated. Repeated method evaluation is

achieved through specifying several values for the seed

argument.

The method evaluation function (specified by the proc

field) here is simply the latrend() function, which will

fit the specified method type to the generated data. ## Specfying the

KmL method

kmlMethodGrid <- simTool::expand_tibble(

proc = "latrend",

method = "lcMethodKML",

nClusters = 1:2,

seed = 1,

response = "Y"

)

head(kmlMethodGrid)

#> # A tibble: 2 × 5

#> proc method nClusters seed response

#> <chr> <chr> <int> <dbl> <chr>

#> 1 latrend lcMethodKML 1 1 Y

#> 2 latrend lcMethodKML 2 1 YSpecifying the GCKM method

Parametric models such as GCKM are more unwieldy to specify in a simulation study due to the need to define the parametric shape through one or more formulas. Formulas are tedious to query or filter in a post-hoc simulation analysis1.

We can solve this by defining simple keywords representing the

different parametric shapes of interest. We then specify a wrapper

function for latrend() that sets the correct

formula argument depending on the keyword.

fitGCKM <- function(type, ...) {

form <- switch(type,

constant = Y ~ Time + (1 | Traj),

linear = Y ~ Time + (Time | Traj)

)

latrend(..., formula = form)

}We can then specify our GCKM method settings in a similar way as we

did for the KmL method, but with the proc argument set to

the fitGCKM function we have just defined.

gckmMethodGrid <- simTool::expand_tibble(

proc = "fitGCKM",

method = "lcMethodGCKM",

type = c("constant", "linear"),

nClusters = 1:2,

seed = 1

)Finally, we combine our method-specific permutation grids into one

large grid. By using the bind_rows() function, mismatches

in the columns between the grids are handled properly.

methodGrid <- dplyr::bind_rows(kmlMethodGrid, gckmMethodGrid)

head(methodGrid)

#> # A tibble: 6 × 6

#> proc method nClusters seed response type

#> <chr> <chr> <int> <dbl> <chr> <chr>

#> 1 latrend lcMethodKML 1 1 Y NA

#> 2 latrend lcMethodKML 2 1 Y NA

#> 3 fitGCKM lcMethodGCKM 1 1 NA constant

#> 4 fitGCKM lcMethodGCKM 1 1 NA linear

#> 5 fitGCKM lcMethodGCKM 2 1 NA constant

#> 6 fitGCKM lcMethodGCKM 2 1 NA linearEvaluating the settings

The eval_tibbles() function takes the data and method

grids as inputs, and runs the method estimation as intended for each

simulation setting. In practice, it is advisable to run evaluations in

parallel as the number of simulation settings is likely much greater

than in this trivial demonstration.

Before we run the simulations, we first want to define a function for

computing our model performance metrics. This function will be run by

eval_tibbles() for every estimated model. The details on

what we are computing here is explained further in the next section.

analyzeModel <- function(model) {

data <- model.data(model)

refModel <- lcModelPartition(data, response = "Y", trajectoryAssignments = "Class")

tibble::tibble(

BIC = BIC(model),

APPA = metric(model, "APPA.min"),

WMAE = metric(model, "WMAE"),

ARI = externalMetric(model, refModel, "adjustedRand")

)

}At last, we can run the simulations and post-hoc summary computations:

result <- simTool::eval_tibbles(

data_grid = dataGrid,

proc_grid = methodGrid,

post_analyze = analyzeModel

)The result table contains a results column

containing the fitted models.

result

#> # A tibble: 48 × 15

#> fun numTraj randomScales data.seed replications proc method nClusters

#> <chr> <dbl> <dbl> <int> <int> <chr> <chr> <int>

#> 1 dataGen 50 0.1 1 1 latrend lcMeth… 1

#> 2 dataGen 50 0.1 1 1 latrend lcMeth… 2

#> 3 dataGen 50 0.1 1 1 fitGCKM lcMeth… 1

#> 4 dataGen 50 0.1 1 1 fitGCKM lcMeth… 1

#> 5 dataGen 50 0.1 1 1 fitGCKM lcMeth… 2

#> 6 dataGen 50 0.1 1 1 fitGCKM lcMeth… 2

#> 7 dataGen 250 0.1 1 1 latrend lcMeth… 1

#> 8 dataGen 250 0.1 1 1 latrend lcMeth… 2

#> 9 dataGen 250 0.1 1 1 fitGCKM lcMeth… 1

#> 10 dataGen 250 0.1 1 1 fitGCKM lcMeth… 1

#> # ℹ 38 more rows

#> # ℹ 7 more variables: seed <dbl>, response <chr>, type <chr>, BIC <dbl>,

#> # APPA <dbl>, WMAE <dbl>, ARI <dbl>

#> Number of data generating functions: 8

#> Number of analyzing procedures: 6

#> Number of replications: 1

#> Estimated replications per hour: 252

#> Start of the simulation: 2025-01-06 18:21:31.095643

#> End of the simulation: 2025-01-06 18:21:45.35592Analyzing the results

We can now analyze the computed results. We use the data.table package to handle the filtering and aggregation of the results table.

library(data.table)

resultsTable <- as.data.table(result$simulation)Recovery of the number of clusters

Often, researchers are interested in estimating the number of clusters by means of minimizing a metric indicating either model fit, cluster separation, or another factor that helps to determine the preferred number of clusters. Evaluating how many times the correct number of clusters is obtained from a cluster metric can help to decide which metric to use, and which selection approach to take.

In this example, we evaluate the use of the Bayesian information criterion (BIC). For each data scenario, we identify the cluster solution that minimizes the BIC.

resultsTable[, .(K = nClusters[which.min(BIC)]), keyby = .(numTraj, randomScales, data.seed, method)]

#> Key: <numTraj, randomScales, data.seed, method>

#> numTraj randomScales data.seed method K

#> <num> <num> <int> <char> <int>

#> 1: 50 0.1 1 lcMethodGCKM 2

#> 2: 50 0.1 1 lcMethodKML 2

#> 3: 50 0.1 2 lcMethodGCKM 2

#> 4: 50 0.1 2 lcMethodKML 2

#> 5: 50 0.5 1 lcMethodGCKM 2

#> 6: 50 0.5 1 lcMethodKML 2

#> 7: 50 0.5 2 lcMethodGCKM 2

#> 8: 50 0.5 2 lcMethodKML 2

#> 9: 250 0.1 1 lcMethodGCKM 2

#> 10: 250 0.1 1 lcMethodKML 2

#> 11: 250 0.1 2 lcMethodGCKM 2

#> 12: 250 0.1 2 lcMethodKML 2

#> 13: 250 0.5 1 lcMethodGCKM 2

#> 14: 250 0.5 1 lcMethodKML 2

#> 15: 250 0.5 2 lcMethodGCKM 2

#> 16: 250 0.5 2 lcMethodKML 2Column K of the table shows the selected number of clusters for each scenario. This shows that estimating the number of clusters by minimizing the BIC results in a consistent overestimation of the number of clusters in our datasets. As an alternative to minimizing the BIC, we could consider using the elbow method instead. However, in order to conclude whether that is a feasible approach to our data would require more simulations, across a greater number of cluster.

Trajectory assignment agreement

Another aspect of interest might be the ability of the cluster model to identify the correct cluster assignment for each of the trajectories. An intuitive metric for assessing this is the adjusted Rand index (ARI). This metric measures the agreement between two partitionings, where a score of 1 indicate a perfect agreement, and a score of 0 indicates an agreement no better than by chance.

We compute the average ARI per data scenario and method to identify in which scenarios the methods were able to recover the reference cluster assignments.

resultsTable[nClusters > 1, .(ARI = mean(ARI)), keyby = .(nClusters, numTraj, randomScales, method)]

#> Key: <nClusters, numTraj, randomScales, method>

#> nClusters numTraj randomScales method ARI

#> <int> <num> <num> <char> <num>

#> 1: 2 50 0.1 lcMethodGCKM 1.0000000

#> 2: 2 50 0.1 lcMethodKML 1.0000000

#> 3: 2 50 0.5 lcMethodGCKM 0.4740203

#> 4: 2 50 0.5 lcMethodKML 0.1374062

#> 5: 2 250 0.1 lcMethodGCKM 0.9880822

#> 6: 2 250 0.1 lcMethodKML 1.0000000

#> 7: 2 250 0.5 lcMethodGCKM 0.4521689

#> 8: 2 250 0.5 lcMethodKML 0.1323025The trajectory assignment recovery is affected the most by the magnitude of the random scale (i.e., the amount of overlap between the clusters). Moreover, it is apparent that KmL performs much worse under high random scale than GCKM.

resultsTable[nClusters > 1, .(ARI = mean(ARI)), keyby = .(randomScales, nClusters, method)]

#> Key: <randomScales, nClusters, method>

#> randomScales nClusters method ARI

#> <num> <int> <char> <num>

#> 1: 0.1 2 lcMethodGCKM 0.9940411

#> 2: 0.1 2 lcMethodKML 1.0000000

#> 3: 0.5 2 lcMethodGCKM 0.4630946

#> 4: 0.5 2 lcMethodKML 0.1348544Residual error

Another aspect of interest might be the residual error, i.e., how well our cluster model describes the data. Here, we gauge this by computing the mean absolute error, weighted by the posterior probabilities2.

Both methods achieve practically the same mean residual error. Another sign of the data comprising two clusters is that the MAE drops down significantly for the two-cluster solution, but hardly any improvement is gained for the three-cluster solution.

resultsTable[randomScales == .1, .(WMAE = mean(WMAE)), keyby = .(nClusters, method)]

#> Key: <nClusters, method>

#> nClusters method WMAE

#> <int> <char> <num>

#> 1: 1 lcMethodGCKM 0.2627083

#> 2: 1 lcMethodKML 0.2627083

#> 3: 2 lcMethodGCKM 0.1120481

#> 4: 2 lcMethodKML 0.1119268As one would expect, the residual error is strongly affected by the magnitude of the random scale.

resultsTable[, .(WMAE = mean(WMAE)), keyby = .(randomScales, nClusters)]

#> Key: <randomScales, nClusters>

#> randomScales nClusters WMAE

#> <num> <int> <num>

#> 1: 0.1 1 0.2627083

#> 2: 0.1 2 0.1120077

#> 3: 0.5 1 0.4563443

#> 4: 0.5 2 0.3257807Another reason to avoid defining formulas directly in the permutation grid is due to the way

data.frameand tibbles handle columns containingformula, returning alistinstead of theformulaelement when querying a single row. This results in an invalid method specification wheneval_tibbles()calls theprocargument using this output.↩︎For KmL and GCKM, the WMAE is effectively the same as the MAE.↩︎